Hi,

Today I want to show you what I have been playing with for the last day. The business case was that some colleagues from Analytics wanted to replicate all the data from other systems into their cluster.

We will start with two independently configured clusters of three servers each (each server runs one ZooKeeper node and one Kafka broker). On both the source and the target I created a topic with five partitions and a replication factor of three. Here is its description:

/opt/kafka/bin/kafka-topics.sh --zookeeper localhost:2181 --describe --topic test-topic

Topic:test-topic PartitionCount:5 ReplicationFactor:3 Configs:

Topic: test-topic Partition: 0 Leader: 1002 Replicas: 1002,1003,1001 Isr: 1002,1003,1001

Topic: test-topic Partition: 1 Leader: 1003 Replicas: 1003,1001,1002 Isr: 1003,1001,1002

Topic: test-topic Partition: 2 Leader: 1001 Replicas: 1001,1002,1003 Isr: 1001,1002,1003

Topic: test-topic Partition: 3 Leader: 1002 Replicas: 1002,1001,1003 Isr: 1002,1001,1003

Topic: test-topic Partition: 4 Leader: 1003 Replicas: 1003,1002,1001 Isr: 1003,1002,1001

The command for creating it is actually pretty simple and goes like this:

/opt/kafka/bin/kafka-topics.sh --zookeeper localhost:2181 --create --replication-factor 3 --partitions 5 --topic test-topic

Once the topic is created on both Kafka clusters, we need to start Mirror Maker (Hortonworks recommends running the process on the destination cluster). To do that, we create two config files on the destination. You can call them producer.config and consumer.config.

For the consumer.config we have the following structure:

bootstrap.servers=source_node0:9092,source_node1:9092,source_node2:9092

exclude.internal.topics=true

group.id=test-consumer-group

client.id=mirror_maker_consumer

For the producer.config we have the following structure:

bootstrap.servers=destination_node0:9092,destination_node1:9092,destination_node2:9092

acks=1

batch.size=100

client.id=mirror_maker_producer

These are the main requirements. Also make sure that your consumer.properties contains the line group.id=test-consumer-group.
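If you prefer to script this step, here is a minimal sketch that writes both files with heredocs. The function name write_mirror_configs and the CONFIG_DIR variable are my own assumptions for illustration, and the host names are just the placeholders used above, so adjust them to your clusters.

```shell
#!/usr/bin/env bash

# Hypothetical helper: writes both Mirror Maker config files into a directory.
# The host names are the placeholders from this post, not real machines.
write_mirror_configs() {
  local dir="$1"
  mkdir -p "$dir"

  # Consumer side: reads from the source cluster.
  cat > "$dir/consumer.config" <<'EOF'
bootstrap.servers=source_node0:9092,source_node1:9092,source_node2:9092
exclude.internal.topics=true
group.id=test-consumer-group
client.id=mirror_maker_consumer
EOF

  # Producer side: writes to the destination cluster.
  cat > "$dir/producer.config" <<'EOF'
bootstrap.servers=destination_node0:9092,destination_node1:9092,destination_node2:9092
acks=1
batch.size=100
client.id=mirror_maker_producer
EOF
}

# Example: write into a scratch directory unless CONFIG_DIR is already set.
CONFIG_DIR="${CONFIG_DIR:-$(mktemp -d)}"
write_mirror_configs "$CONFIG_DIR"

# Show the consumer group the mirror will use.
grep -H '^group.id=' "$CONFIG_DIR/consumer.config"
```

Setting CONFIG_DIR to your Kafka config directory before running it puts the files where the Mirror Maker command expects them.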

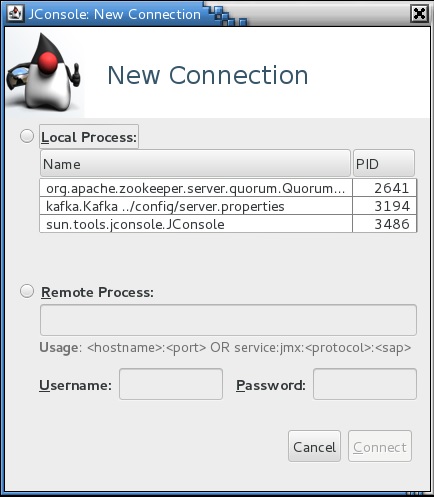

OK, so far so good. Now let's start Mirror Maker with the command below; once it is running you can see it alongside Kafka and ZooKeeper using ps -ef | grep java

/opt/kafka/bin/kafka-run-class.sh kafka.tools.MirrorMaker --consumer.config ../config/consumer.config --producer.config ../config/producer.config --whitelist test-topic &

To check the offsets, on newer versions of Kafka you can always use

/opt/kafka/bin# ./kafka-run-class.sh kafka.admin.ConsumerGroupCommand --group test-consumer-group --bootstrap-server localhost:9092 --describe

GROUP TOPIC PARTITION CURRENT-OFFSET LOG-END-OFFSET LAG OWNER

test-consumer-group test-topic 0 2003 2003 0 test-consumer-group-0_/[dest_ip]

test-consumer-group test-topic 1 2002 2002 0 test-consumer-group-0_/[dest_ip]

test-consumer-group test-topic 2 2003 2003 0 test-consumer-group-0_/[dest_ip]

test-consumer-group test-topic 3 2004 2004 0 test-consumer-group-0_/[dest_ip]

test-consumer-group test-topic 4 2002 2002 0 test-consumer-group-0_/[dest_ip]
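A LAG of 0 on every partition means the mirror has caught up. If you want a single number instead of reading the table, a small awk filter can add up the column. This is only a sketch: total_lag is a name I made up, and it assumes LAG is the sixth column as in the output above, which may not hold on other Kafka versions.

```shell
#!/usr/bin/env bash

# Hypothetical helper: reads the --describe output on stdin and sums the
# LAG column. Assumes LAG is the 6th whitespace-separated field, as in the
# output shown in this post. Non-numeric values (the header) are skipped.
total_lag() {
  awk '$6 ~ /^[0-9]+$/ { sum += $6 } END { print sum + 0 }'
}

# Example with two rows copied from the output above.
total_lag <<'EOF'
GROUP TOPIC PARTITION CURRENT-OFFSET LOG-END-OFFSET LAG OWNER
test-consumer-group test-topic 0 2003 2003 0 test-consumer-group-0_/[dest_ip]
test-consumer-group test-topic 1 2002 2002 0 test-consumer-group-0_/[dest_ip]
EOF
```

Against a live cluster you would pipe the ConsumerGroupCommand call shown earlier into total_lag instead of the heredoc.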

I tested the concept by running a short bash loop that writes 10000 records to a file:

for i in $( seq 1 10000); do echo $i; done >> test.txt

The file can then be fed to the console producer very easily by running:

/opt/kafka/bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test-topic < test.txt
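One small thing to watch: the loop appends with >>, so running it twice leaves 20000 lines in the file. Here is a sketch of a variant that truncates the file first and checks the line count before you feed it to the producer. The names make_test_file and TEST_FILE are assumptions of mine, not anything Kafka provides.

```shell
#!/usr/bin/env bash

# Hypothetical helper: (re)creates a file holding the numbers 1..count,
# one per line. '>' truncates the file, so reruns do not append duplicates.
make_test_file() {
  local count="$1" file="$2"
  seq 1 "$count" > "$file"
}

# Example: write to a scratch file unless TEST_FILE is already set.
TEST_FILE="${TEST_FILE:-$(mktemp)}"
make_test_file 10000 "$TEST_FILE"

# Print the number of records that will be sent.
wc -l < "$TEST_FILE"
```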

After this is finished, feel free to take a look at the topic using /opt/kafka/bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test-topic --from-beginning and you should see a lot of lines 🙂
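Scrolling through thousands of lines is not a great way to confirm that everything arrived, so another option is to compare the end offsets of the topic on both clusters. The sketch below only does the adding up: sum_offsets is a hypothetical helper that expects lines shaped like topic:partition:offset, which is the format I have seen kafka.tools.GetOffsetShell print. Treat that format, and the assumption that the topic was empty before the test, as things to verify on your version.

```shell
#!/usr/bin/env bash

# Hypothetical helper: reads lines shaped like topic:partition:offset on
# stdin and prints the sum of the offsets. Lines in any other shape are
# ignored.
sum_offsets() {
  awk -F: 'NF == 3 && $3 ~ /^[0-9]+$/ { sum += $3 } END { print sum + 0 }'
}

# Example with made-up offsets for a three-partition topic.
sum_offsets <<'EOF'
test-topic:0:10
test-topic:1:20
test-topic:2:30
EOF
```

On a real cluster the input would come from something like /opt/kafka/bin/kafka-run-class.sh kafka.tools.GetOffsetShell --broker-list localhost:9092 --topic test-topic --time -1, run once against the source and once against the destination. If the two sums match, the mirror has copied every record.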

Thank you for your time, and if there are any parts that I missed, please reply.

Cheers!